ML development and deployment today suffer from fragmented and siloed infrastructure that can differ by framework, hardware, and use case. Such fragmentation restrains developer velocity and imposes barriers to model portability, efficiency, and productionization.

Today, we’re taking a significant step towards eliminating these barriers by making the OpenXLA Project, including the XLA, StableHLO, and IREE repositories, available for use and contribution.

OpenXLA is an open source ML compiler ecosystem co-developed by AI/ML industry leaders including Alibaba, Amazon Web Services, AMD, Anyscale, Apple, Arm, Cerebras, Google, Graphcore, Hugging Face, Intel, Meta, NVIDIA and SiFive. It enables developers to compile and optimize models from all leading ML frameworks for efficient training and serving on a wide variety of hardware. Developers using OpenXLA can see significant improvements in training time, throughput, serving latency, and, ultimately, time-to-market and compute costs.

Start accelerating your workloads with OpenXLA on GitHub.

Development teams across numerous industries are using ML to tackle complex real-world challenges, such as prediction and prevention of disease, personalized learning experiences, and black hole physics.

As model parameter counts grow exponentially and compute for deep learning models doubles every six months, developers seek maximum performance and utilization of their infrastructure. Teams are leveraging a wider array of hardware, from power-efficient ML ASICs in the datacenter to edge processors that can deliver more responsive AI experiences. These hardware devices have bespoke software libraries with unique algorithms and primitives.

However, without a common compiler to bridge these diverse hardware devices to the multiple frameworks in use today (e.g., TensorFlow, PyTorch), significant effort is required to run ML efficiently; developers must manually optimize model operations for each hardware target. This means using bespoke software libraries or writing device-specific code, which requires domain expertise. The result is isolated, non-generalizable paths across frameworks and hardware that are costly to maintain, promote vendor lock-in, and slow progress for ML developers.

Our Solution and Goals

The OpenXLA Project provides a state-of-the-art ML compiler that can scale amidst the complexity of ML infrastructure. Its core pillars are performance, scalability, portability, flexibility, and extensibility for users. With OpenXLA, we aspire to realize the real-world potential of AI by accelerating its development and delivery.

Our goals are to:

- Make it easy for developers to compile and optimize any model in their preferred framework for a wide range of hardware, through (1) a unified compiler API that any framework can target and (2) pluggable device-specific back-ends and optimizations.

- Deliver industry-leading performance for current and emerging models that (1) scales across multiple hosts and accelerators, (2) satisfies the constraints of edge deployments, and (3) generalizes to novel model architectures of the future.

- Build a layered and extensible ML compiler platform that provides developers with (1) MLIR-based components that are reconfigurable for their unique use cases and (2) plug-in points for hardware-specific customization of the compilation flow.

A Community of AI/ML Leaders

The challenges we face in ML infrastructure today are immense and no single organization can effectively resolve them alone. The OpenXLA community brings together developers and industry leaders operating at different levels of the AI stack, from frameworks to compilers, runtimes, and silicon, and is thus well suited to address the fragmentation we see across the ML landscape.

As an open source project, we’re guided by the following set of principles:

- Equal footing: Individuals contribute on equal footing regardless of their affiliation. Technical leaders are those who contribute the most time and energy.

- Culture of respect: All members are expected to uphold project values and code of conduct, regardless of their position in the community.

- Scalable, efficient governance: Small groups make consensus-based decisions, with clear but rarely used paths for escalation.

- Transparency: All decisions and rationale should be legible to the public community.

Performance, Scale, and Portability: Leveraging the OpenXLA Ecosystem

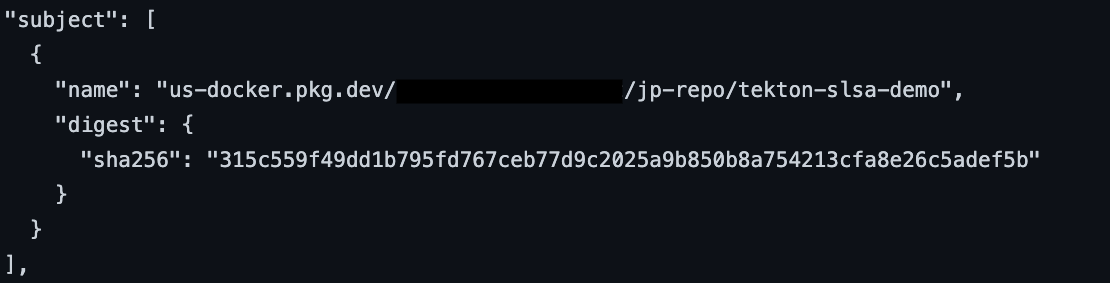

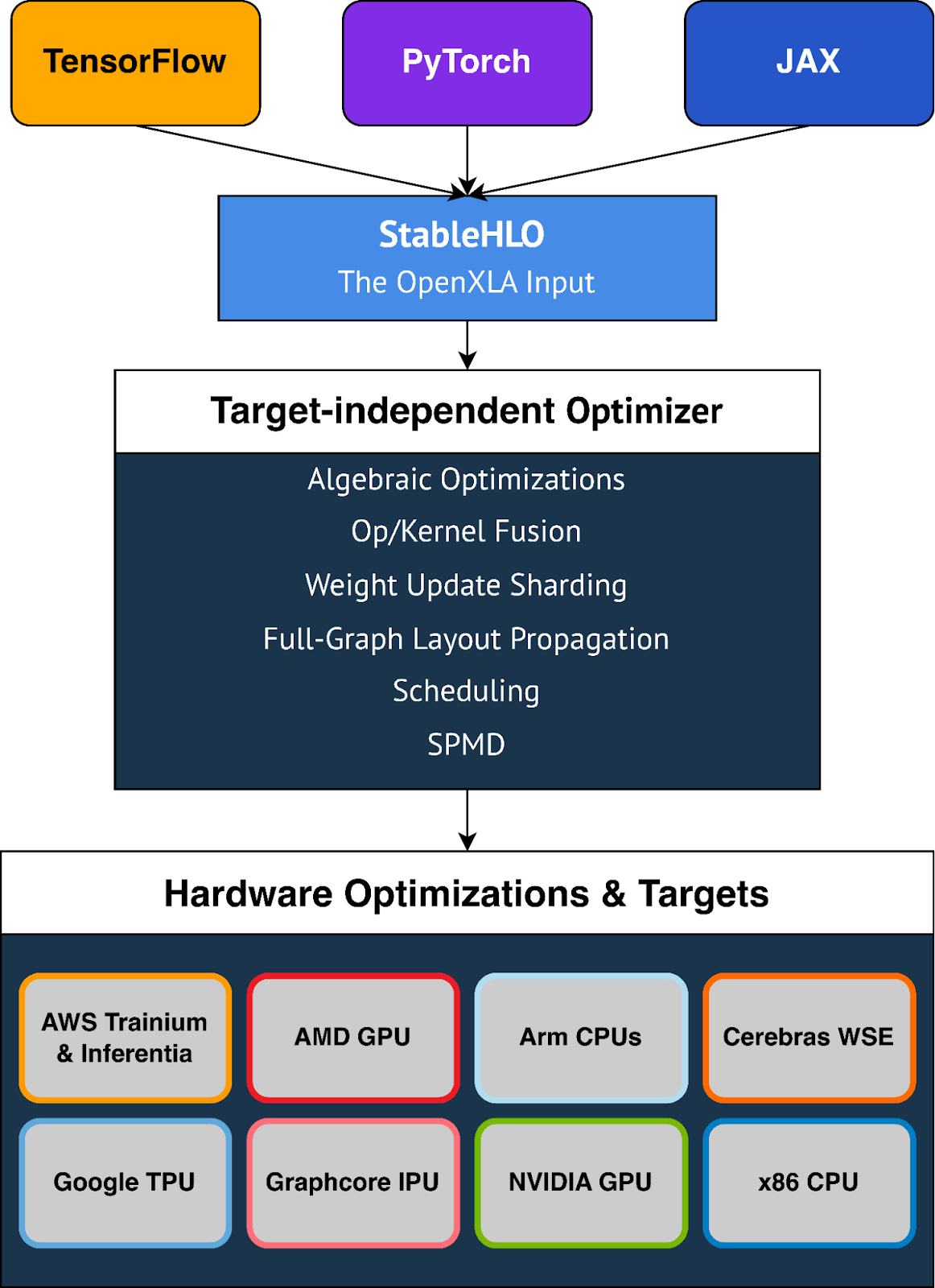

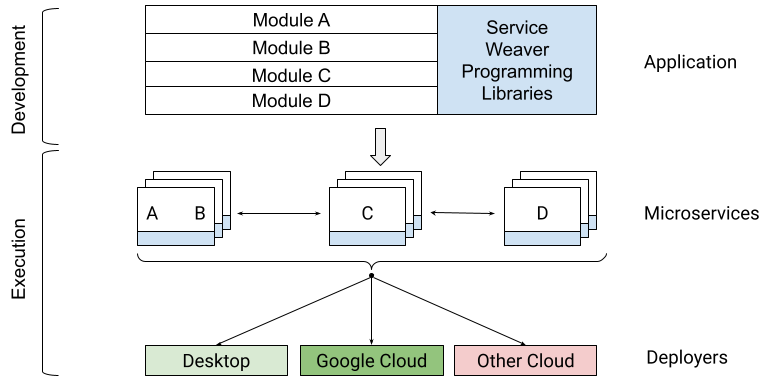

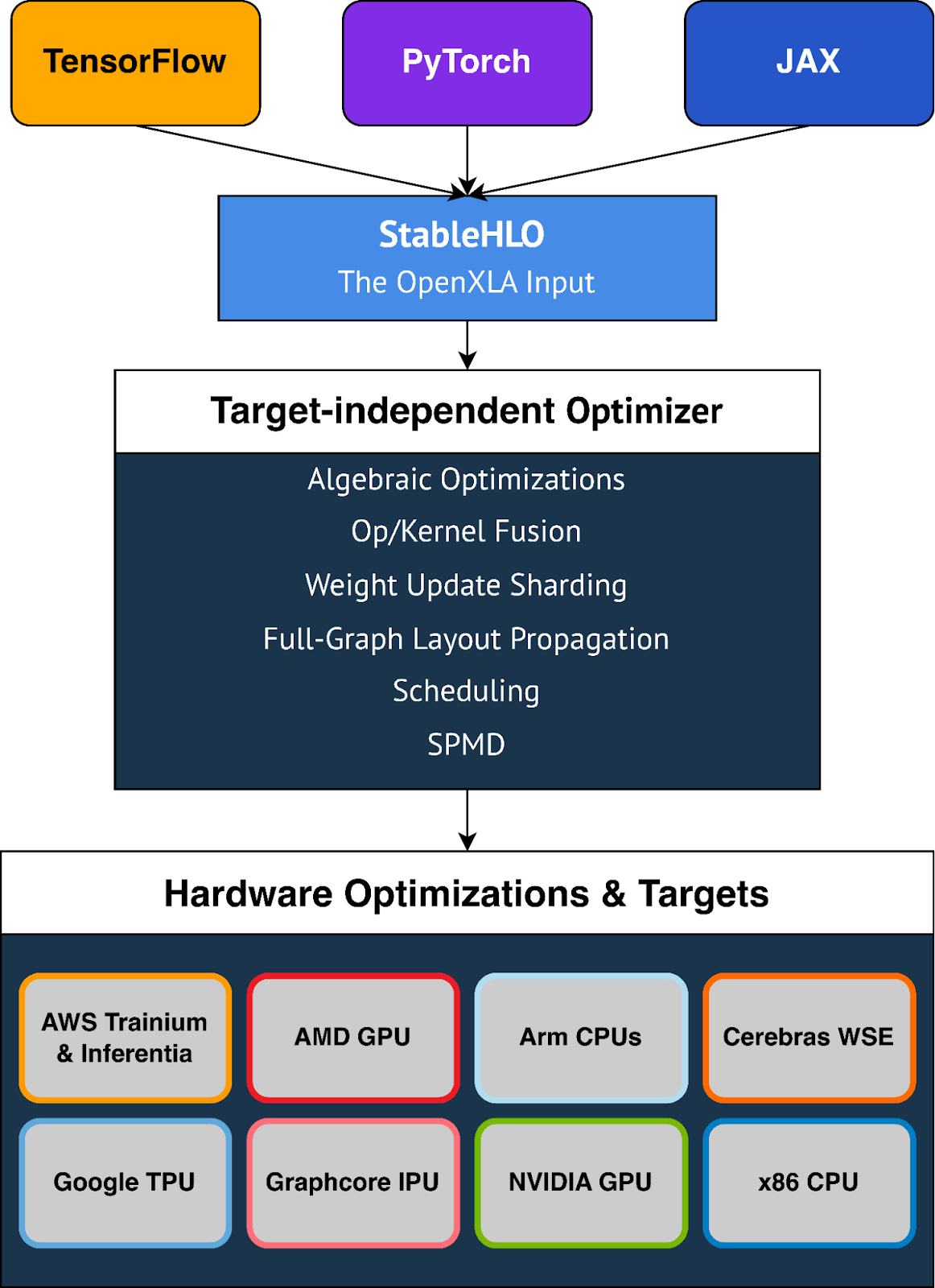

OpenXLA eliminates barriers for ML developers via a modular toolchain that is supported by all leading frameworks through a common compiler interface, leverages standardized model representations that are portable, and provides a domain-specific compiler with powerful target-independent and hardware-specific optimizations. This toolchain includes XLA, StableHLO, and IREE, all of which leverage MLIR, a compiler infrastructure that enables machine learning models to be consistently represented, optimized, and executed on hardware.

High-level OpenXLA compilation flow and architecture. Depicted optimizations, frameworks, and hardware targets represent a select portion of what is available to developers through OpenXLA.
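To make this flow concrete, here is a minimal sketch using JAX, one of the frameworks that produces StableHLO. It assumes a recent JAX release in which `Lowered.as_text()` returns StableHLO by default; the function `layer` and the shapes are hypothetical. `lower` emits the framework-independent StableHLO module, and `compile` hands it to XLA, which optimizes it and returns an executable for the default backend.

```python
import jax
import jax.numpy as jnp

# Hypothetical model fragment: a dense layer followed by tanh.
def layer(x, w):
    return jnp.tanh(x @ w + 1.0)

x = jnp.ones((4, 8), dtype=jnp.float32)
w = jnp.ones((8, 2), dtype=jnp.float32)

# Stage 1: trace the Python function and lower it to a StableHLO module.
lowered = jax.jit(layer).lower(x, w)
stablehlo_text = lowered.as_text()

# Stage 2: XLA optimizes the module and compiles it for the default backend.
compiled = lowered.compile()
optimized_hlo = compiled.as_text()  # post-optimization HLO, backend-specific

# Stage 3: run the compiled executable.
out = compiled(x, w)

print(stablehlo_text[:300])
print(out)
```

Printing `optimized_hlo` next to `stablehlo_text` is a quick way to see what the compiler changed between the portable input and the backend-specific program.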

Here are some of the key benefits that OpenXLA provides:

Spectrum of ML Use Cases

Usage of OpenXLA today spans the gamut of ML use cases. This includes full-scale training of models like DeepMind’s AlphaFold, GPT2 and Swin Transformer on Alibaba Cloud, and multi-modal LLMs for Amazon.com. Users like Waymo leverage OpenXLA for on-vehicle, real-time inference. In addition, OpenXLA is being used to optimize serving of Stable Diffusion on AMD RDNA™ 3-equipped local machines.

Optimal Performance, Out of the Box

OpenXLA makes it easy for developers to speed up model performance without needing to write device-specific code. It features whole-model optimizations including simplification of algebraic expressions, optimization of in-memory data layout, and improved scheduling for reduced peak memory use and communication overhead. Advanced operator fusion and kernel generation help improve device utilization and reduce memory bandwidth requirements.
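One way to observe these optimizations is to compare a function with the HLO that XLA actually compiles. The sketch below assumes JAX as the frontend, and `gelu_tanh` is a hypothetical example. Executed op by op, each element-wise step would read and write the full array; after compilation, XLA typically fuses the chain into fewer kernels, which is where the memory-bandwidth savings come from. Fusion decisions depend on the backend and XLA version, so the sketch only prints them rather than assuming a particular structure.

```python
import jax
import jax.numpy as jnp

# Hypothetical activation: a chain of element-wise ops (tanh-approximated GELU).
def gelu_tanh(x):
    return 0.5 * x * (1.0 + jnp.tanh(0.7978845608 * (x + 0.044715 * x ** 3)))

x = jnp.linspace(-3.0, 3.0, 1024, dtype=jnp.float32)

compiled = jax.jit(gelu_tanh).lower(x).compile()
hlo = compiled.as_text()

# In the optimized HLO, the element-wise chain typically appears inside one or
# more "fusion" computations rather than as separate kernels; the exact output
# depends on the backend and XLA version.
print("occurrences of 'fusion' in optimized HLO:", hlo.count("fusion"))

y = compiled(x)
```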

Scale Workloads With Minimal Effort

Developing efficient parallelization algorithms is time-consuming and requires expertise. With features like GSPMD, developers only need to annotate a subset of critical tensors that the compiler can then use to automatically generate a parallelized computation. This removes much of the work required to partition and efficiently parallelize models across multiple hardware hosts and accelerators.
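A minimal sketch of this annotation style, assuming JAX as the frontend: only the inputs (and, optionally, one intermediate) carry sharding annotations, and XLA's SPMD partitioner (GSPMD, in the releases this post describes) derives a partitioned program from them. The mesh axis name `data`, the function `forward`, and the shapes are hypothetical; the batch size is chosen so it divides evenly across however many local devices exist.

```python
import numpy as np
import jax
import jax.numpy as jnp
from jax.sharding import Mesh, NamedSharding, PartitionSpec as P

# A 1-D mesh over all local devices; on a single-CPU machine it has one device.
n = len(jax.devices())
mesh = Mesh(np.array(jax.devices()), axis_names=("data",))

# Hypothetical batch whose leading dimension divides evenly across devices.
x = jnp.arange(2 * n * 4, dtype=jnp.float32).reshape(2 * n, 4)
w = jnp.full((4, 2), 0.1, dtype=jnp.float32)

# Annotations: shard x's rows across the "data" axis, replicate w everywhere.
x = jax.device_put(x, NamedSharding(mesh, P("data", None)))
w = jax.device_put(w, NamedSharding(mesh, P()))

@jax.jit
def forward(x, w):
    h = x @ w
    # Optional hint on an intermediate; the partitioner propagates the rest.
    h = jax.lax.with_sharding_constraint(h, NamedSharding(mesh, P("data", None)))
    return jnp.tanh(h)

out = forward(x, w)
print(out.sharding)
```

The model code itself contains no per-device logic; moving to more devices changes only the mesh.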

OpenXLA provides out-of-the-box support for a multitude of hardware devices including AMD and NVIDIA GPUs, x86 CPU and Arm architectures, as well as ML accelerators like Google TPUs, AWS Trainium and Inferentia, Graphcore IPUs, Cerebras Wafer-Scale Engine, and many more. OpenXLA additionally supports TensorFlow, PyTorch, and JAX via StableHLO, a portability layer that serves as OpenXLA's input format.
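From the user's side, this portability means the framework code stays the same while XLA targets whichever backend is installed. A minimal sketch assuming JAX: it runs one jitted function on every device JAX can see. On a CPU-only machine the loop covers just the host CPU; with a GPU or TPU build of JAX installed, the same code runs on those devices instead. `sum_of_squares` is a hypothetical example.

```python
import jax
import jax.numpy as jnp

# Hypothetical computation: sum of squares.
@jax.jit
def sum_of_squares(x):
    return jnp.sum(jnp.square(x))

x = jnp.arange(4, dtype=jnp.float32)

# The same jitted function runs on each local device; XLA compiles it for that
# device's backend as needed, without changes to the Python source.
results = {}
for device in jax.devices():
    x_on_device = jax.device_put(x, device)
    results[str(device)] = float(sum_of_squares(x_on_device))

print(jax.default_backend(), results)
```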

Flexibility

OpenXLA gives users the flexibility to manually tune hotspots in their models. Extension mechanisms such as Custom-call enable users to write deep learning primitives with CUDA, HIP, SYCL, Triton and other kernel languages so they can take full advantage of hardware features.
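Custom-call itself is an XLA-level mechanism. As one hedged illustration of how a framework exposes hand-written kernels, the sketch below writes a tiny kernel with Pallas, JAX's kernel language; on GPU and TPU, Pallas kernels are compiled by a backend kernel compiler (such as Triton or Mosaic) and embedded in the XLA program as custom calls. Here `interpret=True` runs the kernel in interpreter mode so the sketch also works on CPU. This assumes a JAX release that ships `jax.experimental.pallas`; `add_kernel` is a hypothetical example, not part of the OpenXLA API.

```python
import jax
import jax.numpy as jnp
from jax.experimental import pallas as pl

# Kernel body: refs point at input/output buffers; this one adds element-wise.
def add_kernel(x_ref, y_ref, o_ref):
    o_ref[...] = x_ref[...] + y_ref[...]

@jax.jit
def add(x, y):
    return pl.pallas_call(
        add_kernel,
        out_shape=jax.ShapeDtypeStruct(x.shape, x.dtype),
        interpret=True,  # interpreter mode, so the sketch also runs on CPU
    )(x, y)

x = jnp.arange(8, dtype=jnp.float32)
print(add(x, x))
```

On real accelerators, dropping `interpret=True` lets the kernel compile to device code while the surrounding program is still optimized by XLA.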

StableHLO

StableHLO, a portability layer between ML frameworks and ML compilers, is an operation set for high-level operations (HLO) that supports dynamism, quantization, and sparsity. Furthermore, it can be serialized into MLIR bytecode to provide compatibility guarantees. All major ML frameworks (JAX, PyTorch, TensorFlow) can produce StableHLO. Through 2023, we plan to collaborate closely with the PyTorch team to enable an integration with the recent PyTorch 2.0 release.
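A minimal sketch of producing and round-tripping a StableHLO artifact from JAX, assuming JAX 0.4.30 or later, where `jax.export` is a public module (older releases had it under `jax.experimental.export`). The serialized blob carries the StableHLO module as MLIR bytecode, which is what the compatibility guarantees apply to; `model` and the input shape are hypothetical.

```python
import jax
import jax.numpy as jnp
from jax import export

# Hypothetical model function.
def model(x):
    return jnp.sin(x) * 2.0

# Export a jitted function for a given input shape/dtype; the result wraps a
# StableHLO module plus metadata.
exported = export.export(jax.jit(model))(jax.ShapeDtypeStruct((4,), jnp.float32))
print(exported.mlir_module()[:300])  # human-readable StableHLO text

# Serialize to bytes (the StableHLO module is stored as MLIR bytecode) ...
blob = exported.serialize()

# ... and later, possibly in another process, deserialize and run it.
restored = export.deserialize(blob)
x = jnp.arange(4, dtype=jnp.float32)
y = restored.call(x)
print(y)
```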

We’re excited for developers to get their hands on these features and many more that will significantly accelerate and simplify their ML workflows.

Moving Forward Together

The OpenXLA Project is being built by a collaborative community, and we're excited to help developers extend and use it to address the gaps and opportunities we see in the ML industry today. Get started with OpenXLA on GitHub, and sign up for our mailing list for product and community announcements. You can follow us on Twitter: @OpenXLA

Here’s what our collaborators are saying about OpenXLA:

Alibaba

“At Alibaba, OpenXLA is leveraged by Elastic GPU Service customers for training and serving of large PyTorch models. We’ve seen significant performance improvements for customers using OpenXLA, notably speed-ups of 72% for GPT2 and 88% for Swin Transformer on NVIDIA GPUs. We're proud to be a founding member of the OpenXLA Project and work with the open-source community to develop an advanced ML compiler that delivers superior performance and user experience for Alibaba Cloud customers.” – Yangqing Jia, VP, AI and Data Analytics, Alibaba

AWS

“We're excited to be a founding member of the OpenXLA Project, which will democratize access to performant, scalable, and extensible AI infrastructure as well as further collaboration within the open source community to drive innovation. At AWS, our customers scale their generative AI applications on AWS Trainium and Inferentia and our Neuron SDK relies on XLA to optimize ML models for high performance and best in class performance per watt. With a robust OpenXLA ecosystem, developers can continue innovating and delivering great performance with a sustainable ML infrastructure, and know that their code is portable to use on their choice of hardware.” – Nafea Bshara, Vice President and Distinguished Engineer, AWS

AMD

“We are excited about the future direction of OpenXLA on the broad family of AMD devices (CPUs, GPUs, AIE) and are proud to be part of this community. We value projects with open governance, flexible and broad applicability, cutting edge features and top-notch performance and are looking forward to the continued collaboration to expand open source ecosystem for ML developers.” – Alan Lee, Corporate Vice President, Software Development, AMD

Anyscale

"Anyscale develops open and scalable technologies like Ray to help AI practitioners develop their applications faster and make them available to more users. Recently we partnered with the ALPA project to use OpenXLA to show high-performance model training for Large Language models at scale. We are glad to participate in OpenXLA and excited how this open source effort enables running AI workloads on a wider variety of hardware platforms efficiently, thereby lowering the barrier of entry, reducing costs and advancing the field of AI faster." – Philipp Moritz, CTO, Anyscale

Arm

“The OpenXLA Project marks an important milestone on the path to simplifying ML software development. We are fully supportive of the OpenXLA mission and look forward to leveraging the OpenXLA stability and standardization across the Arm® Neoverse™ hardware and software roadmaps.” – Peter Greenhalgh, vice president of technology and fellow, Arm

Cerebras

“At Cerebras, we build AI accelerators that are designed to make training even the largest AI models quick and easy. Our systems and software meet users where they are -- enabling rapid development, scaling, and iteration using standard ML frameworks without change. OpenXLA helps extend our user reach and accelerated time to solution by providing the Cerebras Wafer-Scale Engine with a common interface to higher level ML frameworks. We are tremendously excited to see the OpenXLA ecosystem available for even broader community engagement, contribution, and use on GitHub.” – Andy Hock, VP and Head of Product, Cerebras Systems

Google

“Open-source software gives everyone the opportunity to help create breakthroughs in AI. At Google, we’re collaborating on the OpenXLA Project to further our commitment to open source and foster adoption of AI tooling that raises the standard for ML performance, addresses incompatibilities between frameworks and hardware, and is reconfigurable to address developers’ tailored use cases. We’re excited to develop these tools with the OpenXLA community so that developers can drive advancements across many different layers of the AI stack.” – Jeff Dean, Chief Scientist, Google DeepMind and Google Research

Graphcore

“Our IPU compiler pipeline has used XLA since it was made public. Thanks to XLA's platform independence and stability, it provides an ideal frontend for bringing up novel silicon. XLA’s flexibility has allowed us to expose our IPU’s novel hardware features and achieve state of the art performance with multiple frameworks. Millions of queries a day are served by systems running code compiled by XLA. We are excited by the direction of OpenXLA and hope to continue contributing to the open source project. We believe that it will form a core component in the future of AI/ML.” – David Norman, Director of Software Design, Graphcore

Hugging Face

“Making it easy to run any model efficiently on any hardware is a deep technical challenge, and an important goal for our mission to democratize good machine learning. At Hugging Face, we enabled XLA for TensorFlow text generation models and achieved speed-ups of ~100x. Moreover, we collaborate closely with engineering teams at Intel, AWS, Habana, Graphcore, AMD, Qualcomm and Google, building open source bridges between frameworks and each silicon, to offer out of the box efficiency to end users through our Optimum library. OpenXLA promises standardized building blocks upon which we can build much needed interoperability, and we can't wait to follow and contribute!” – Morgan Funtowicz, Head of Machine Learning Optimization, Hugging Face

Intel

“At Intel, we believe in open, democratized access to AI. Intel CPUs, GPUs, Habana Gaudi accelerators, and oneAPI-powered AI software including OpenVINO, drive ML workloads everywhere from exascale supercomputers to major cloud deployments. Together with other OpenXLA members, we seek to support standards-based, componentized ML compiler tools that drive innovation across multiple frameworks and hardware environments to accelerate world-changing science and research.” – Greg Lavender, Intel SVP, CTO & GM of Software & Advanced Technology Group

Meta

“In research, at Meta AI, we have been using XLA, a core technology of the OpenXLA project, to enable PyTorch models for Cloud TPUs and were able to achieve significant performance improvements on important projects. We believe that open source accelerates the pace of innovation in the world, and are excited to be a part of the OpenXLA Project.” – Soumith Chintala, Lead Maintainer, PyTorch

NVIDIA

“As a founding member of the OpenXLA Project, NVIDIA is looking forward to collaborating on AI/ML advancements with the OpenXLA community and are positive that with wider engagement and adoption of OpenXLA, ML developers will be empowered with state-of-the-art AI infrastructure.” – Roger Bringmann, VP, Compiler Software, NVIDIA

Acknowledgements

Abhishek Ratna, Allen Hutchison, Aman Verma, Amber Huffman, Andrew Leaver, Ashok Bhat, Chalana Bezawada, Chandan Damannagari, Chris Leary, Christian Sigg, Cormac Brick, David Dunleavy, David Huntsperger, David Majnemer, Elisa Garcia Anzano, Elizabeth Howard, Gadi Hutt, Geeta Chauhan, Geoffrey Martin-Noble, George Karpenkov, Ian Chan, Jacinda Mein, Jacques Pienaar, Jake Hall, Jake Harmon, Jason Furmanek, Julian Walker, Kulin Seth, Kanglan Tang, Kuy Mainwaring, Mahesh Balasubramanian, Michael Hudgins, Milad Mohammadi, Paul Baumstarck, Peter Hawkins, Puneith Kaul, Rich Heaton, Robert Hundt, Roman Dzhabarov, Rostam Dinyari, Scott Kulchycki, Scott Main, Scott Todd, Shantu Roy, Shauheen Zahirazami, Stella Laurenzo, Stephan Herhut, Tomás Longeri, Tres Popp, Vartika Singh, Vinod Grover, Will Constable, and Zac Mustin.

Founding Team

Eugene Burmako, James Rubin, Magnus Hyttsten, Mehdi Amini, Navid Khajouei, and Thea Lamkin

By James Rubin, Product Manager, Machine Learning